Top 5 Applications of AI and Machine Learning in Robotics

What is robotics?

Robotics is a branch of technology that deals with physical robots. Robots are programmable machines that can usually carry out a series of actions autonomously or semi-autonomously. In my view, three important factors define a robot:

- Robots interact with the physical world through sensors and actuators.

- Robots are programmable.

- Robots are usually autonomous or semi-autonomous.

I say that robots are usually autonomous because some robots aren't. Some people say that a robot must be able to "think" and make decisions. However, there is no standard definition of "robot thinking". Requiring a robot to "think" suggests that it has some level of artificial intelligence and machine learning, but the many non-intelligent robots that exist show that thinking cannot be a requirement for a robot. However you choose to define a robot, robotics involves designing, building, and programming physical robots that are able to interact with the physical world. Only a small part of robotics involves artificial intelligence.

What is artificial intelligence and machine learning?

Artificial intelligence (AI) is a branch of computer science. It involves developing computer programs to complete tasks that would otherwise require human intelligence. AI algorithms can tackle learning, perception, problem-solving, language understanding, and logical reasoning. Machine learning is the subset of AI in which programs improve at a task by learning from data rather than by following hand-written rules. For example, AI algorithms are used in Google searches, Facebook's recommendation engine, GPS route finders, and much more.

You may wonder how much of a place there is for machine learning in robotics. The answer is that only a limited portion of the developments in robotics can be credited to machine learning. Even so, a growing number of businesses worldwide are using the transformative capabilities of machine learning, mainly when applied to robotic systems in the workplace. In recent years, machine learning has improved efficiency in fields such as pick-and-place operations, drone systems, manufacturing assembly, and quality control.

Some scientists and researchers debate whether the definition of a robot can be relative or depend on context, much like the concept of privacy. That might be the better approach as more and more rules and regulations are created around the use of robots in varying contexts.

With this in mind, I would say that these various types of machines are a class of mobile robots. When robots are designed for a set of behaviors in a plethora of environments, their bodies and physical abilities will reflect the best fit for those characteristics. The exceptions are robots that provide medical services to humans, and possibly service robots that are meant to build a more personal, humanized relationship.

According to a report released by Evans Data Corporation Global Development, robotics and AI/machine learning are the top choices for developers for 2020: 56.4% of participants stated that they are developing robotics apps, and 24.7% of those developers are using machine learning in their projects.

Keep reading! Now it's time to look at the main story of the article.

01. Automatic translation

This is an uncomplicated concept that everyone can easily understand. Machine learning can be used to translate text into another language almost instantaneously, and the same can be done with text in images. With plain text, the algorithm learns how words fit together and so translates more precisely. With images, a neural network identifies the letters in the picture, extracts them as text, translates that text, and then places the translation back into the image.

02. Computer vision

Computer vision is closely related to our topic, but there is some discussion about whether machine vision or robot vision is the more accurate term, because robot vision involves more than computer algorithms. Robot vision is so strongly linked to machine vision that machine vision can be credited with the emergence of automatic inspection systems and robot guidance.

The small difference between the two may lie in kinematics as applied to robot vision, which encompasses orientation frame calibration and a robot's ability to physically affect its environment. The information available on the web has propelled advances in computer vision, which in turn have helped further machine learning-based structured prediction techniques.

A simple example is anomaly detection with unsupervised learning, such as building systems capable of finding and assessing faults in silicon wafers using convolutional neural networks, as engineered by researchers at the Biomimetic Robotics and Machine Learning lab. Extrasensory technologies such as lidar, radar, and ultrasound, like those from Nvidia, are also driving the development of 360-degree vision-based systems for drones and autonomous vehicles.
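To make the idea of unsupervised anomaly detection concrete, here is a minimal sketch in Python. It is not the convolutional approach mentioned above. It swaps in a much simpler statistical stand-in (a robust z-score based on the median) just to show the core idea of flagging faults without any labeled examples. The function names, the threshold of 3.5, and the wafer readings are all assumptions made up for illustration.

```python
from statistics import median


def robust_z_scores(values):
    """Score each value by its distance from the median, in units of MAD."""
    med = median(values)
    # Median absolute deviation: a spread estimate that outliers barely affect.
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # Limitation of this sketch: with zero spread nothing can be scored.
        return [0.0 for _ in values]
    # 1.4826 rescales MAD so it is comparable to a standard deviation
    # when the data is roughly normally distributed.
    return [abs(v - med) / (1.4826 * mad) for v in values]


def find_anomalies(values, threshold=3.5):
    """Return the indices whose robust z-score exceeds the threshold."""
    scores = robust_z_scores(values)
    return [i for i, score in enumerate(scores) if score > threshold]


# Hypothetical wafer thickness readings; no labels say which one is faulty.
readings = [725.1, 724.8, 725.3, 725.0, 724.9, 731.6, 725.2, 725.0]
print(find_anomalies(readings))
```

No reading is ever labeled "good" or "bad" here; the detector only learns what typical data looks like and reports whatever sits far away from it. A vision system would apply the same principle to image features instead of single numbers.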

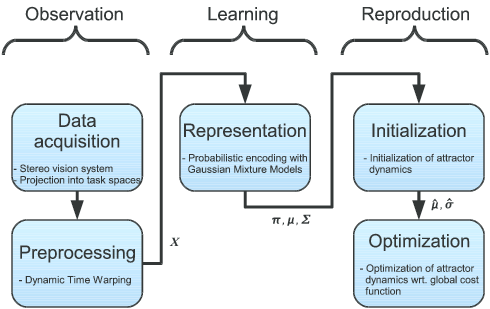

03. Imitation learning

Imitation learning is closely related to observational learning, the behavior humans exhibit as infants and toddlers, and it is usually grouped under the category of reinforcement learning. As far back as 1999, it was posited that this kind of learning could be used in humanoid robots.

These days, imitation learning has become an integral part of field robotics in industries such as agriculture, construction, military, search and security, and many more. In these situations, manually programming robotic solutions is much more challenging.

Instead, collaborative methods such as programming by demonstration are used in conjunction with machine learning to teach robots in the field.
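One of the simplest forms of learning from demonstration is often called behavioral cloning: record what a human operator does in each situation, then fit a model that reproduces it. The sketch below assumes a one-dimensional state and a linear policy fitted by ordinary least squares. The function name and the demonstration data (lateral offset and steering command) are hypothetical, and real field robots would use far richer states and models.

```python
def fit_linear_policy(demos):
    """Least-squares fit of action = w * state + b from (state, action) pairs."""
    n = len(demos)
    mean_s = sum(s for s, _ in demos) / n
    mean_a = sum(a for _, a in demos) / n
    cov = sum((s - mean_s) * (a - mean_a) for s, a in demos)
    var = sum((s - mean_s) ** 2 for s, _ in demos)
    if var == 0:
        raise ValueError("demonstrations must cover more than one state")
    w = cov / var
    b = mean_a - w * mean_s
    # The learned policy maps any new state to an imitated action.
    return lambda state: w * state + b


# Hypothetical demonstrations: (lateral offset, steering command) pairs
# recorded while a human operator keeps a vehicle centered on a path.
demos = [(-1.0, 0.52), (-0.5, 0.24), (0.0, 0.01), (0.5, -0.26), (1.0, -0.49)]
policy = fit_linear_policy(demos)
print(round(policy(0.25), 3))
```

Nobody wrote a steering rule by hand here. The rule (steer against the offset) is recovered from the demonstrations alone, which is the appeal of this approach when manual programming is impractical.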

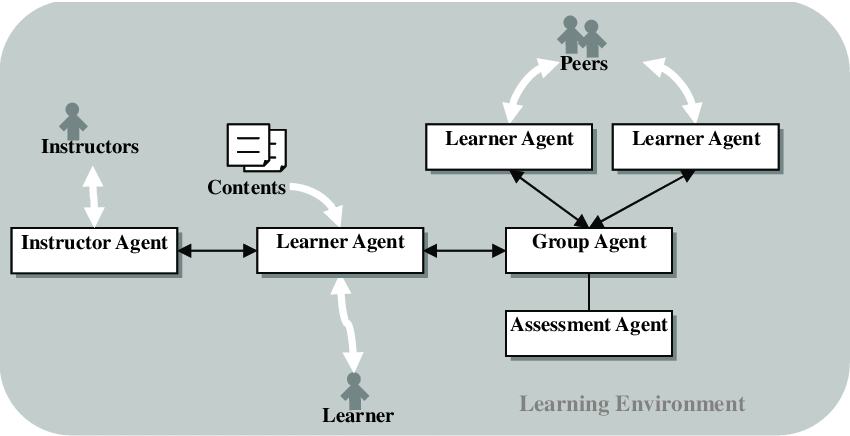

04. Multi-agent learning

Negotiation and coordination are the key components of multi-agent learning, which involves machine learning-based robots that can adapt to a changing landscape of other agents and robots and find equilibrium strategies. Examples of multi-agent learning include:

- No-regret learning tools

- Market-based distributed control systems
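As an illustration of the first item, here is a minimal sketch of Hedge (multiplicative weights), one well-known no-regret learning algorithm. The corridor scenario, the loss values, and the learning rate `eta=0.5` are assumptions invented for this example; losses are assumed to lie between 0 and 1.

```python
import math


def hedge(loss_rounds, eta=0.5):
    """Run Hedge over a sequence of per-action loss vectors (losses in [0, 1]).

    Returns the final probability distribution over actions and the
    learner's expected cumulative loss.
    """
    n_actions = len(loss_rounds[0])
    weights = [1.0] * n_actions
    expected_loss = 0.0
    for losses in loss_rounds:
        total = sum(weights)
        probs = [w / total for w in weights]
        # Expected loss of playing the current mixed strategy this round.
        expected_loss += sum(p * l for p, l in zip(probs, losses))
        # Shrink the weight of each action exponentially in its loss.
        weights = [w * math.exp(-eta * l) for w, l in zip(weights, losses)]
    total = sum(weights)
    return [w / total for w in weights], expected_loss


# Hypothetical scenario: each round a robot picks one of three corridors,
# and the loss is the congestion caused by the other robots.
rounds = [[0.9, 0.1, 0.6], [0.8, 0.2, 0.7], [1.0, 0.0, 0.5]] * 10
probs, loss = hedge(rounds)
best_fixed = min(sum(r[i] for r in rounds) for i in range(3))
print([round(p, 3) for p in probs])
print("regret:", round(loss - best_fixed, 3))
```

"No regret" means that the gap between the learner's loss and the loss of the best single action in hindsight grows more slowly than the number of rounds, so the average regret per round shrinks toward zero. In this run the robot ends up putting almost all of its probability on the least congested corridor.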

Robots can work together to build a better and more inclusive learning model than a single robot could. One example is based on the concept of robots exploring a building and its room layouts and autonomously building a knowledge base. Each robot develops its own catalog and then combines it with the other robots' data sets; the distributed algorithm outperformed the standard algorithm in creating this knowledge base.

This type of machine learning approach enables robots to compare datasets or catalogs, reinforce mutual observations, and correct omissions. It will undoubtedly play a near-future role in several robotic applications, including multiple autonomous land and airborne vehicles.
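The catalog-combining idea can be sketched in a few lines of Python. This is not the distributed algorithm from the research described above, only a simplified illustration of the three effects: comparing catalogs, reinforcing observations that several robots agree on, and filling in what one robot missed. The robot names, rooms, objects, and the `min_sightings` parameter are all hypothetical.

```python
from collections import Counter


def merge_catalogs(catalogs):
    """Merge per-robot catalogs into {room: Counter(object -> robots that saw it)}."""
    merged = {}
    for catalog in catalogs:
        for room, objects in catalog.items():
            # set() ensures one robot counts at most once per object.
            merged.setdefault(room, Counter()).update(set(objects))
    return merged


def confirmed(merged, min_sightings=2):
    """Keep only the objects that at least `min_sightings` robots reported."""
    return {
        room: sorted(obj for obj, n in counts.items() if n >= min_sightings)
        for room, counts in merged.items()
    }


robot_a = {"kitchen": ["table", "sink"], "hall": ["door"]}
robot_b = {"kitchen": ["table", "fridge"], "office": ["desk"]}
robot_c = {"kitchen": ["sink", "table"], "hall": ["door", "plant"]}

merged = merge_catalogs([robot_a, robot_b, robot_c])
print(sorted(merged))     # rooms known to the team as a whole
print(confirmed(merged))  # observations backed by two or more robots
```

The merged catalog contains the office even though only one robot visited it (an omission corrected for the others), while the confirmed view keeps only the objects that multiple robots agree on.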

05. Assistive and medical technologies

An assistive robot is a device that can sense, process information, and perform actions that help people with disabilities and seniors. Smart assistive technologies also exist for the general population, such as driver assistance tools. Movement therapy robots provide a therapeutic or diagnostic benefit. Both of these technologies are mostly confined to labs because they are too costly for most hospitals in the world.

The obstacles are more complicated than you might imagine, even though smart assistive robots can adjust to the user's requirements, which demands partial autonomy. Compared to other industries, the healthcare industry is taking greater advantage of machine learning methodologies and applying them to robotics.

Conclusion

Many organizations around the globe use AI opportunity landscape research to gain data-backed confidence in their AI planning and to select the AI initiatives most likely to deliver a return on investment. From natural language processing to computer vision, and from robotics to robotic process automation, the question is how you can harness AI to gain a competitive advantage. If you are also interested in taking on the challenge of AI and machine learning in robotics, please get in touch and we will make it happen.

Have a great end of the weekend.
